Why do LLMs sometimes get simple tasks wrong?

Ever wondered why LLMs sometimes give surprisingly wrong answers to simple tasks—even when the question seems straightforward?

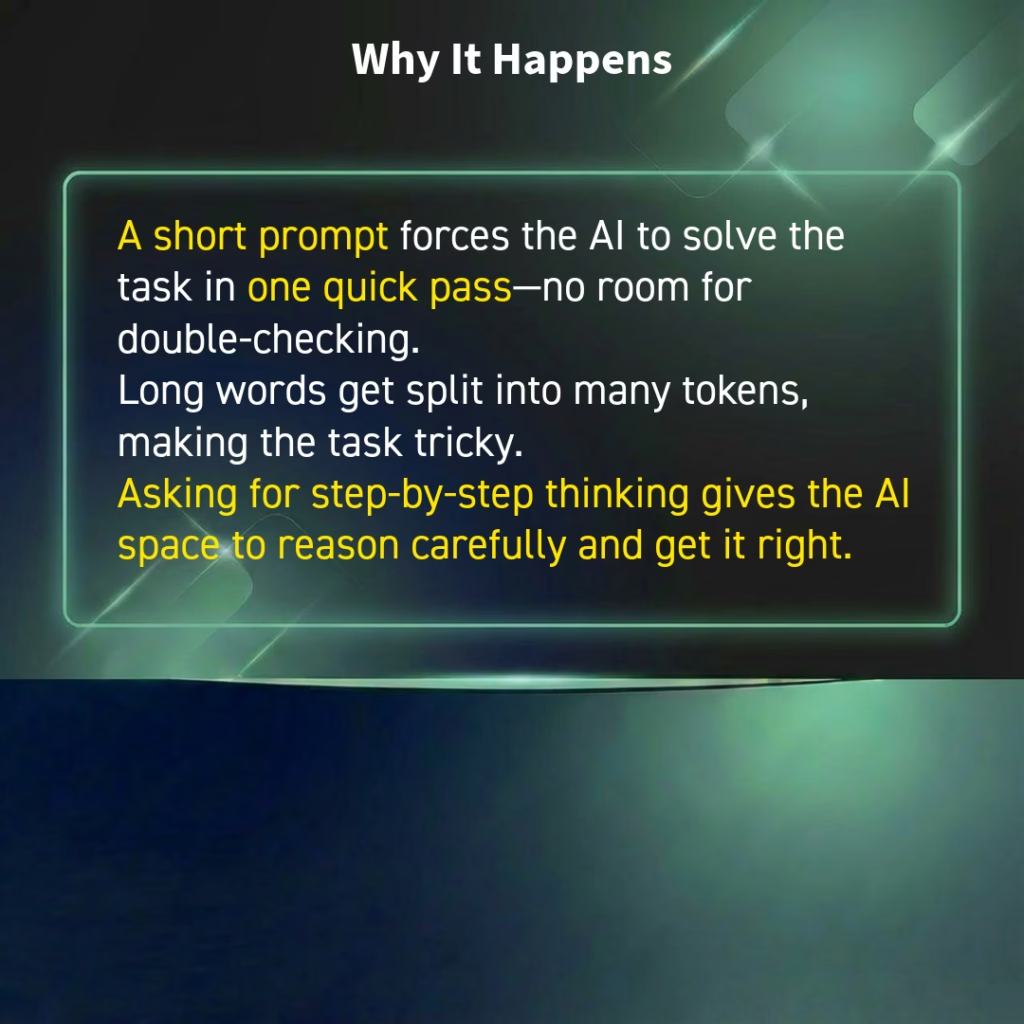

It’s often not a lack of intelligence… it’s a lack of context.

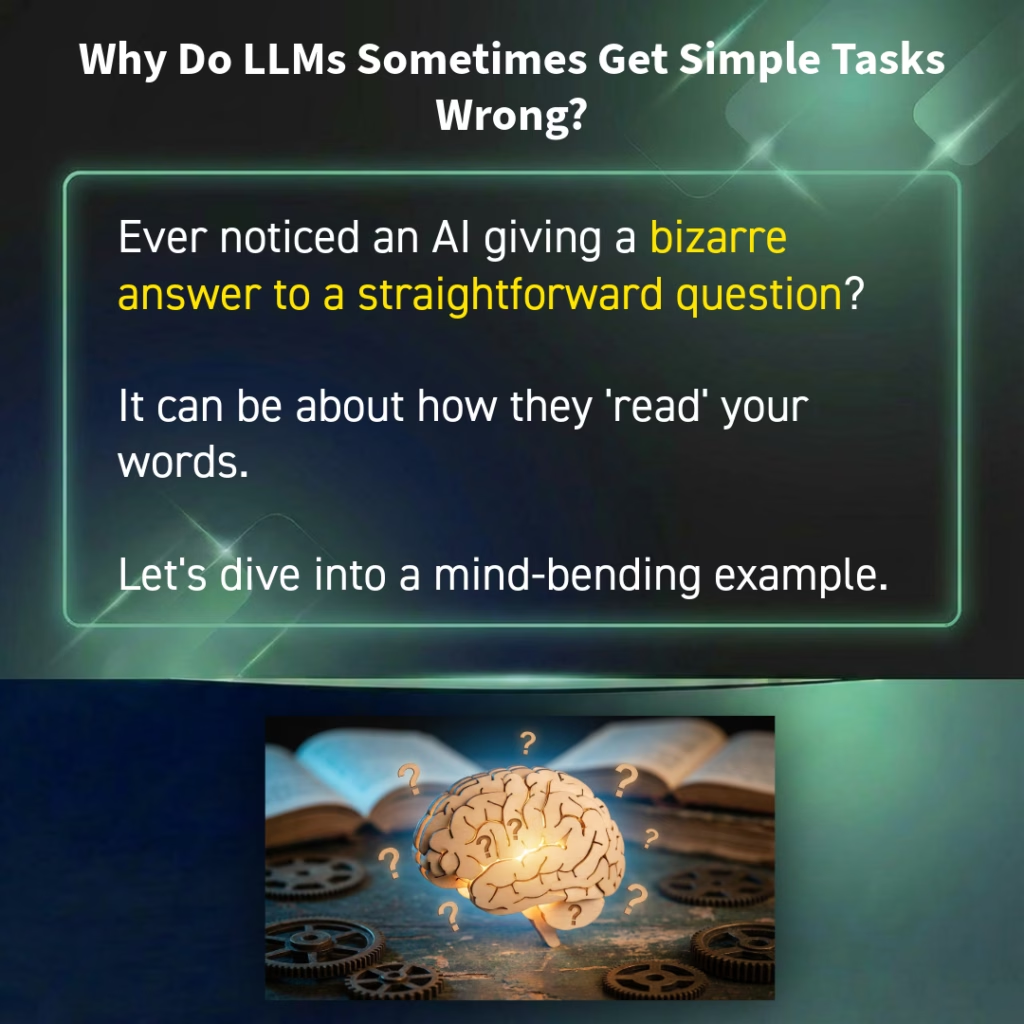

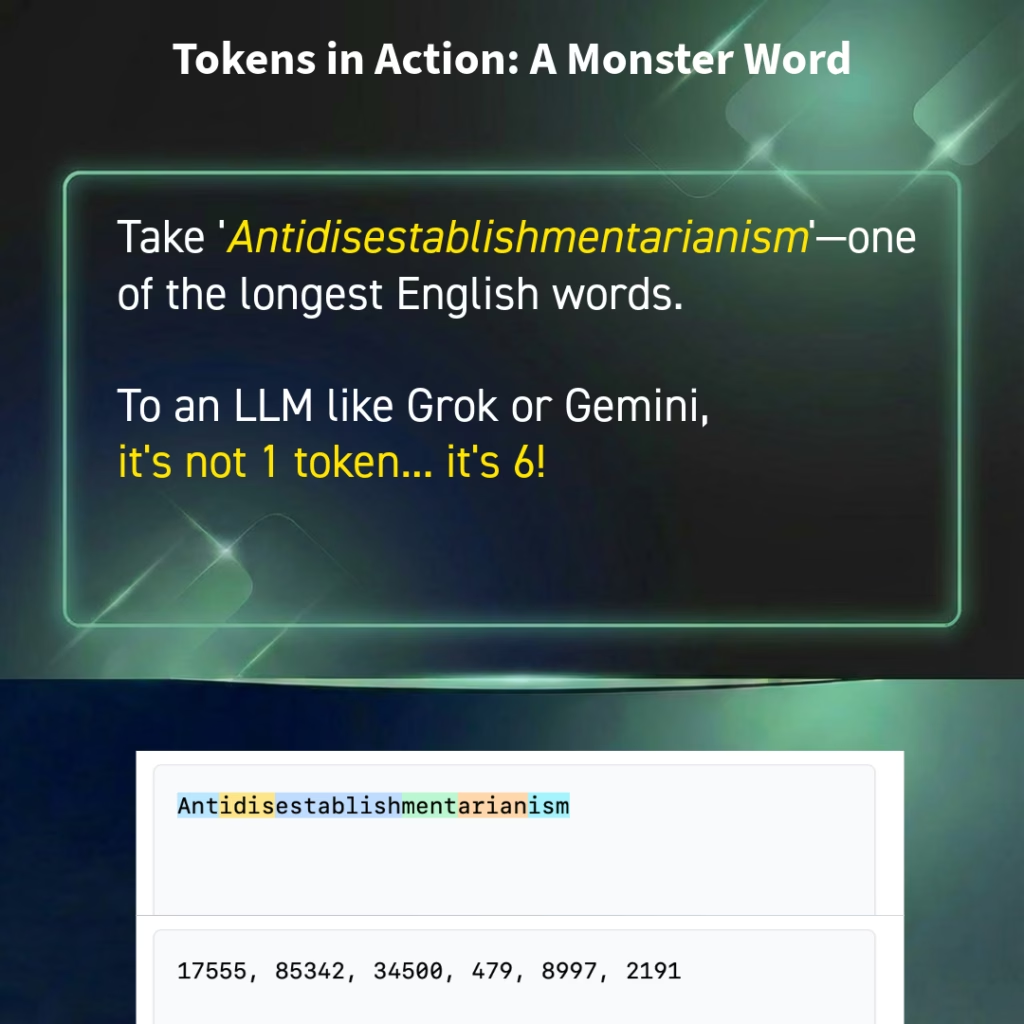

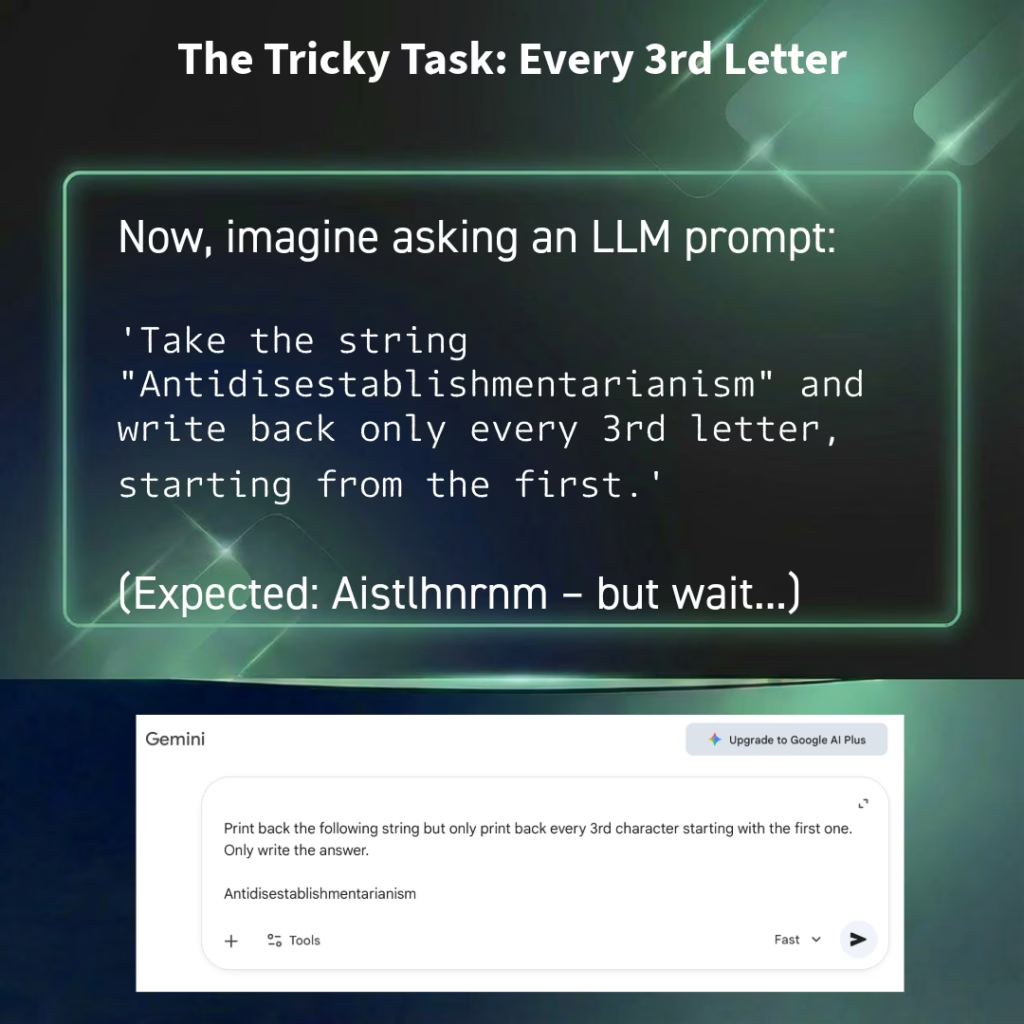

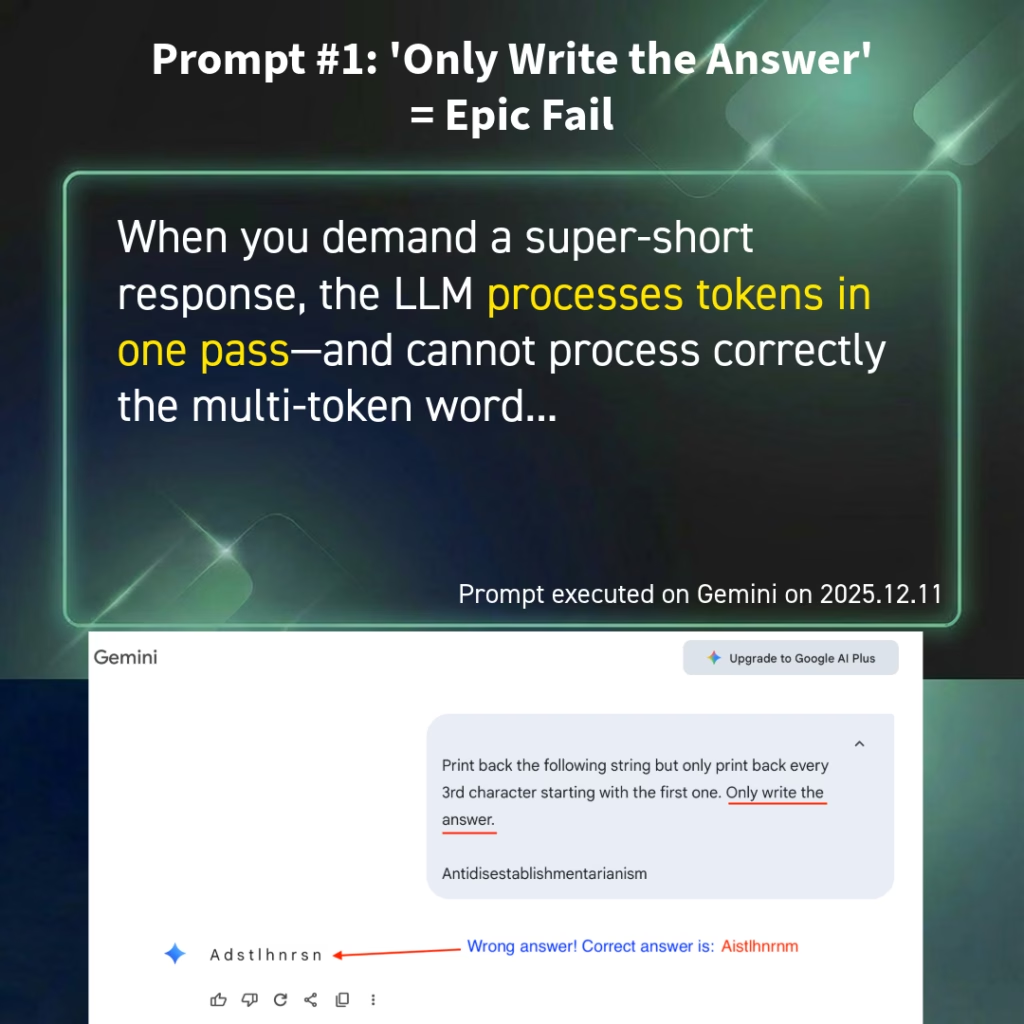

In this carousel, I break down how tokenization works, why long words can trip up the model, and, most importantly, how adding just one sentence (“explain your thinking step-by-step”) turned unreliable guesses into accurate results in my experiment.

A small prompting change, a huge difference in performance.

Swipe through to see the experiment and the results 👉

What do you think?

#AI #PromptEngineering #LLM #Productivity #ArtificialIntelligence
