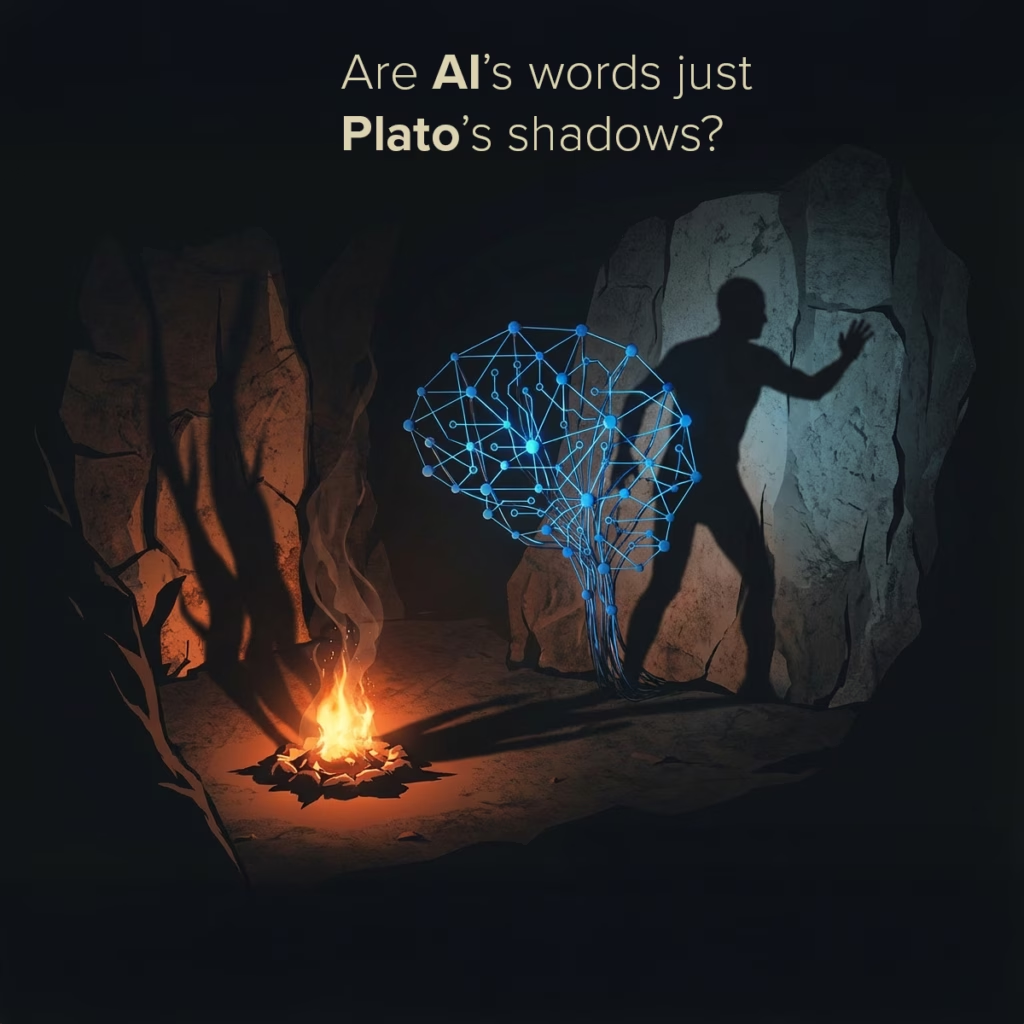

Are AI’s answers reality — or just Plato’s shadows on the cave wall?

We often forget that these systems do not see the world.

They do not observe, experience, or understand anything.

They predict text — nothing more — and the fluency creates an illusion of depth.

But when we read fluent language, our brain fills in the missing pieces:

- we project intention,
- we infer reasoning,
- we attribute understanding.

That projection is powerful — and misleading.

It transforms statistical shadows into the appearance of intelligence.

This confusion sits at the heart of many AI debates today:

- we expect explanation where there is only correlation
- we expect judgment where there is only pattern-matching
- we expect truth where there is only probability

In The Hunt for Intelligence, I argue that the danger is not the machine.

It’s the illusion: the humanity we cannot help projecting, because we are fooled and we love it.

The ease with which we mistake a well-formed shadow for a mind.

If we want to use these systems well — in business, in research, in daily decisions — the first step is to see the shadows for what they are.

Clear thinking beats the cave.